The TeamPCP hacker group is threatening to leak source code stolen from Mistral AI unless a buyer is found for the data.

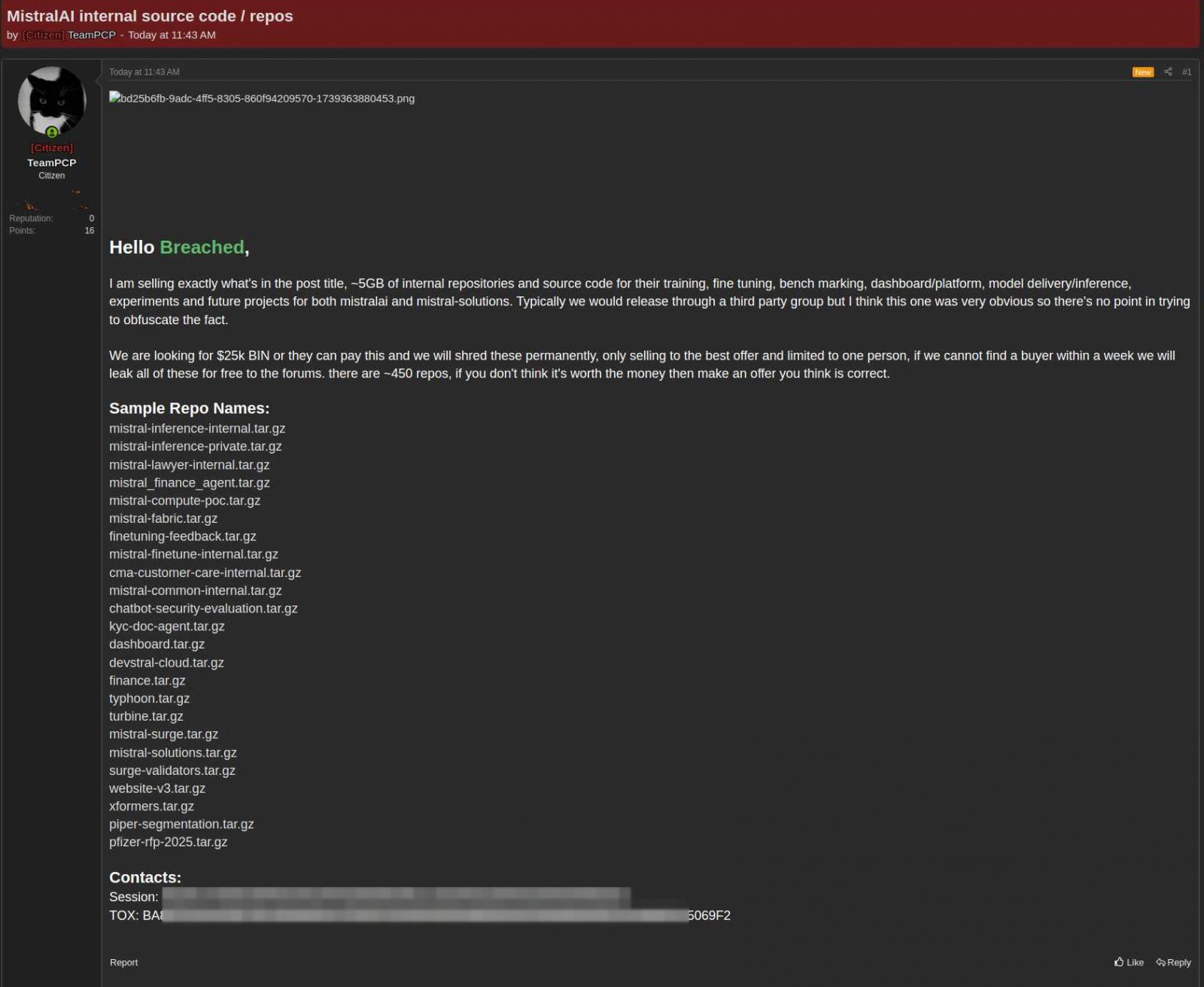

In a post on a hacker forum, the threat actor is asking $25,000 for a set of nearly 450 repositories.

Mistral AI is a French artificial intelligence company, founded by former researchers from Google DeepMind and Meta, that develops large language models (LLMs), releasing some as open-weight models and keeping others proprietary.

In a statement to BleepingComputer, Mistral AI confirmed that hackers compromised a codebase management system after the Mini Shai-Hulud software supply-chain attack.

The supply-chain attack started with the compromise of official TanStack and Mistral AI packages, which were published using stolen CI/CD credentials and legitimate workflows.

It then spread to hundreds of other software projects on the npm and PyPI registries, including UiPath, Guardrails AI, and OpenSearch.

“They [the hackers] contaminated some of our SDK packages for a brief period,” the company said.

TeamPCP claims to have stolen nearly 5 gigabytes “of internal repositories and source code” that Mistral uses for training, fine-tuning, benchmarking, model delivery, and inference in experiments and future projects.

“We are looking for $25k BIN or they can pay this and we will shred these permanently, only selling to the best offer and limited to one person, if we cannot find a buyer within a week we will leak all of these for free to the forums,” the hackers said.

The threat actor appears open to negotiation, stating that the asking price is flexible and that interested buyers are free to submit what they consider a fair offer for the roughly 450 repositories.

source: KELA

Mistral AI told BleepingComputer that TeamPCP managed to contaminate some of the company’s software development kit (SDK) packages.

In an advisory published earlier this week, the company said that the breach occurred after a developer device was compromised in the TanStack supply-chain attack.

However, Mistral says its forensic investigation determined that the impacted data was not part of its core code repositories.

“Neither our hosted services, managed user data, nor any of our research and testing environments were compromised,” Mistral told BleepingComputer.

Earlier today, OpenAI also confirmed that the TanStack supply-chain attack impacted the systems of two of its employees who had access to “a limited subset of internal source code repositories.”

A small set of credentials was stolen from the repositories, but the investigation found no evidence that they were used in additional attacks.

OpenAI responded by rotating the code-signing certificates exposed in the incident and warning macOS users that they must update their OpenAI desktop apps before June 12, or the software may fail to launch and stop receiving updates.
